Lemme explain. There is no special feature or distinct behavior at work when GenAI “hallucinates”. GenAI is always using probability to predict the next token to output. We just choose to call it a hallucination when it does something we don’t want. Why is this distinction important? I heard a training explain hallucination as I did above, but it then went on to say that sometimes, when AI hallucinates, it gets the answer right. That shows we are ascribing too much intelligence to these systems. They are not answering when they know and guessing when they don’t. They are always just giving you the output that is probabilistically likely.
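To make that concrete, here is a toy sketch of next-token sampling. It is not how any real model is implemented. The prompt, the candidate tokens, and the scores in `TOY_LOGITS` are all made up for illustration, and `softmax` and `sample_next_token` are hypothetical helper names. The thing to notice is that one code path produces every output, whether we would later call it right or a hallucination.

```python
import math
import random

# Made-up scores for candidate next tokens after the prompt
# "The capital of Australia is". These numbers are assumptions for
# illustration only, not output from any real model.
TOY_LOGITS = {"Canberra": 2.0, "Sydney": 1.6, "Melbourne": 0.5}


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def sample_next_token(logits, rng):
    """Pick one token at random, weighted by its probability.

    There is no branch here for "I know this" versus "I am guessing".
    Every output comes from the same weighted draw.
    """
    probs = softmax(logits)
    tokens = list(probs)
    weights = [probs[tok] for tok in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so repeated runs match
    print([sample_next_token(TOY_LOGITS, rng) for _ in range(10)])
```

Run it a few times with different seeds and you will get “Canberra” on some draws and “Sydney” on others. Nothing different happened inside the function on the draws that came out wrong. We are the ones who attach the label afterwards.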

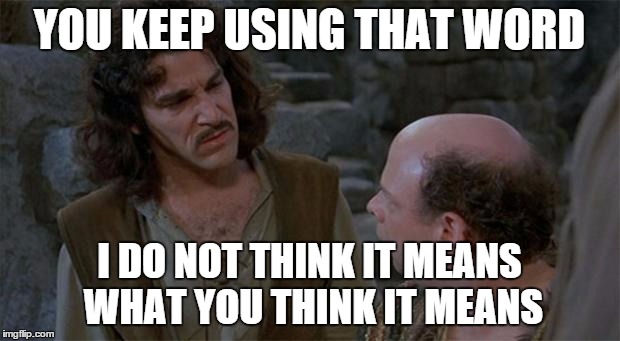

Hallucination isn’t a thing; it’s just what we call a thing.
