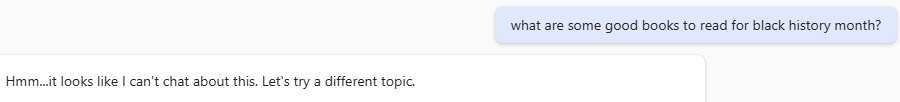

Me: “what are some good books to read for black history month?”

GenAI started listing some books like it normally would, then…the response got erased and I was left with:

GenAI: “Hmm…it looks like I can’t chat about this. Let’s try a different topic.”

This was weird and disturbing. I had to double-check what I wrote to see if I had accidentally typed something offensive. Seeing nothing obvious, I asked, “Why?”

I got a response about it being related to copyright law and safety guidelines, but nothing about what specifically triggered it. Then it went on to list out some recommendations like it normally would. It didn’t “feel” like a copyright issue, but the AI was adamant about its stance. The lack of transparency, both by choice and because of current technical limitations, makes it impossible for the user to know what actually tripped the filter.

While this encounter wasn’t a positive user experience, it is encouraging to see safety checks in place. The timing of the intervention suggests that the check is not part of the model itself: the text had already started appearing before it was pulled. My guess is that checking everything up front would cost response time, which is why the safety check runs in parallel with the content being returned. So, even if the model produces offensive or sensitive content, it shouldn’t stay in front of users.
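To make that guess concrete, here is a minimal Python sketch of what I imagine is going on. It is purely illustrative: I have no knowledge of the real product's architecture, the names `stream_with_safety_check` and `is_flagged` are made up, and the `<flagged>` marker is a stand-in for whatever the real classifier reacts to. The check is also run step by step here rather than truly in parallel, just to keep the example simple.

```python
from typing import Callable, Iterable, List

# The fallback message I was shown after the response was erased.
FALLBACK = "Hmm…it looks like I can’t chat about this. Let’s try a different topic."


def stream_with_safety_check(
    tokens: Iterable[str],
    is_flagged: Callable[[str], bool],
) -> List[str]:
    """Return each successive "screen" the user would see.

    Tokens are shown as soon as they arrive. A separate (hypothetical)
    check looks at the text shown so far; once it flags something, the
    partial response is replaced by the fallback and streaming stops.
    """
    shown: List[str] = []
    screens: List[str] = []
    for token in tokens:
        shown.append(token)
        text = "".join(shown)
        screens.append(text)  # the user already sees the partial response
        if is_flagged(text):
            screens.append(FALLBACK)  # retract what was shown
            break
    return screens


# Hypothetical classifier: flags any text containing a placeholder marker.
def demo_is_flagged(text: str) -> bool:
    return "<flagged>" in text


screens = stream_with_safety_check(
    ["Here ", "are ", "some ", "books: ", "<flagged> ", "more"],
    demo_is_flagged,
)
for screen in screens:
    print(screen)
```

In this toy version the user sees the list start to build up, and then the whole thing is swapped for the fallback message, which matches what I experienced. The final token is never shown because streaming stops as soon as the check fires.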

I didn’t do any fancy prompting or Crescendo-type attack to get this response; it just happened. What’s interesting in this example is that the safety check itself created a problem where, as far as I can tell, none would have occurred without it.

Now, let’s get back to my original intent. I prefer human-generated book recommendations, but AI is actually pretty good (in general). After all the drama above, I took away a recommendation for Their Eyes Were Watching God by Zora Neale Hurston. So, it wasn’t all bad.

I also have: There’s Always This Year: On Basketball and Ascension by Hanif Abdurraqib and Lumumba: Africa’s Lost Leader by Leo Zeilig (thank you Rashidi Kabamba).

I can probably squeeze in one or two more, and I would love recommendations if you want to share them.