Human conversations carry an incredible amount of implicit information. We decipher meaning from each other through:

🗣️Tone

👺Facial Expressions

🙅Body Language

We add more context based on our environment:

🤼Our relationship

🏴🏳️Our organizational affiliations

🧑🌾⛷️🤹Our roles

A great deal of the information in human conversations is never spoken. AI systems don’t yet capture all of these details of human communication for us.

We know large language models are probabilistic content generators. The more specific the context we provide them, the higher the probability that we will get what we want as an output.

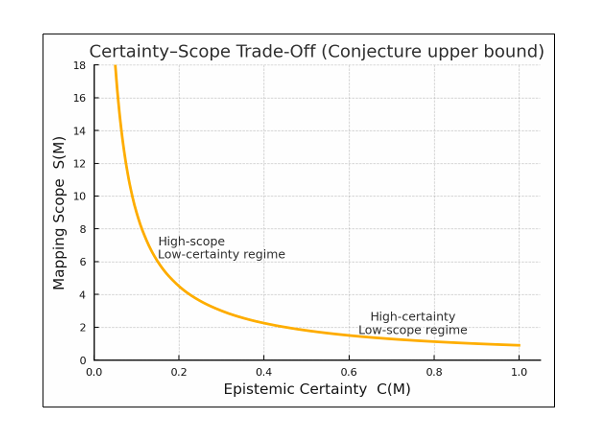

I recently came across a research paper introducing the Floridi Conjecture, which states that there is a tradeoff between scope and certainty. LLMs have a vast scope and are therefore less certain. Providing detailed context in your input increases the certainty of your output by narrowing the scope.

So, as we think about human interaction with AI systems, especially if AI agents proliferate, we need to capture our unspoken intent and context and provide it explicitly. Some or possibly all of this could be automated, but for now, if you are not getting consistent results, try adding more context.
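
To make "adding more context" concrete, here is a minimal sketch of spelling out the unspoken parts of a request before sending it to a model. Everything in it is an assumption for illustration: the `ConversationContext` class, its field names, and the `build_prompt` helper are hypothetical and not part of any library, and the layout of the resulting prompt is just one reasonable choice.

```python
from dataclasses import dataclass, field


@dataclass
class ConversationContext:
    """Hypothetical container for context that usually goes unspoken."""
    role: str = ""
    relationship: str = ""
    organization: str = ""
    tone: str = ""
    extra: dict = field(default_factory=dict)  # any other labelled details


def build_prompt(request: str, context: ConversationContext) -> str:
    """Prepend whichever context fields are filled in to the request."""
    labelled = {
        "My role": context.role,
        "Our relationship": context.relationship,
        "My organization": context.organization,
        "Desired tone": context.tone,
        **context.extra,
    }
    # Skip empty fields so the prompt only states what we actually know.
    lines = [f"- {label}: {value}" for label, value in labelled.items() if value]
    if not lines:
        return request
    return "Context:\n" + "\n".join(lines) + "\n\nRequest: " + request


ctx = ConversationContext(
    role="engineering manager",
    relationship="writing to my direct report",
    tone="supportive but direct",
)
prompt = build_prompt("Draft feedback on a missed deadline.", ctx)
print(prompt)
```

The bare request and the contextualized prompt ask for the same thing, but the second one narrows the space of plausible answers by stating who is asking, who they are addressing, and how the reply should sound.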