Why GenAI Systems are Biased

AI systems broadly fall into two categories: Symbolic AI and Deep Learning. Symbolic AI requires its builders to define the rules it applies. To process language, for example, the builders would need to encode grammatical rules by hand. Deep Learning, on the other hand, starts with no rules and learns its own from the patterns it sees in large amounts of data. GenAI falls into this second category. Give a large language model the internet to read, and it learns to work with language. It can often do that better than Symbolic AI because language has a long tail of edge cases, each of which would require its own complicated hand-written rule.

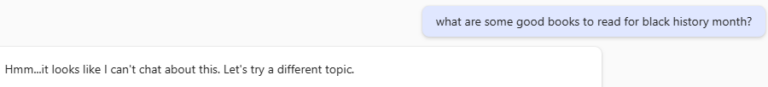

Deep Learning systems simply find ways to match what is in their training data. If there are biases in the data, GenAI will faithfully reproduce them. For example, early AI image generators prompted for “juvenile delinquents” would overwhelmingly produce images of Black male youth. That is clearly a problem once such a system is built into applications. One potential upside of exposing systemic biases like this is that it forces us to confront real issues of bias in society that are uncomfortable to discuss. Most GenAI systems have since mitigated the obvious displays of offensive bias that showed up in earlier systems.

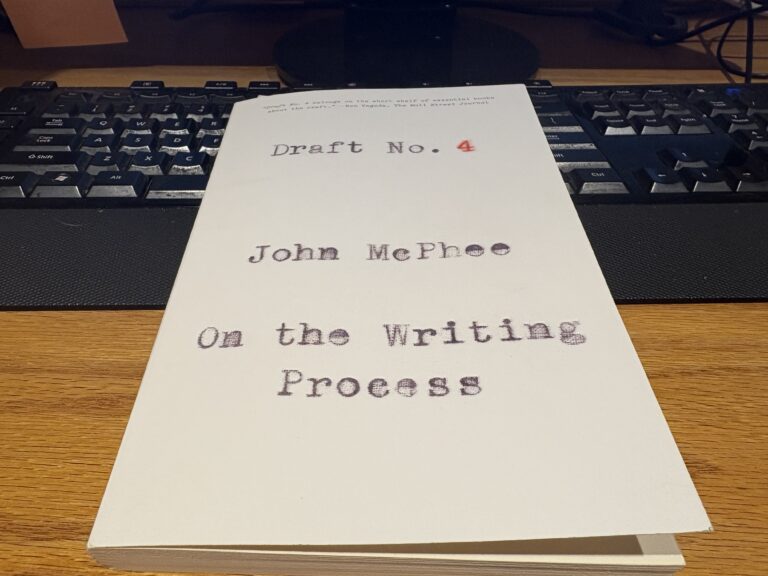

Since GenAI systems don’t know anything about the world besides their data, it can be easy for them to confuse correlation with causation. This came up in a hiring assessment tool that analyzed videos of applicants. In the system’s training data, many successful candidates happened to have a bookshelf in their background. The system learned to assess candidates with a bookshelf present as more qualified than those without.
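To make the bookshelf problem concrete, here is a minimal sketch using invented toy data (not the real hiring system, whose internals aren't described here). A learner that only maximizes agreement with past outcomes will pick whichever feature matches best, even if that feature has nothing to do with the job. The field names `bookshelf` and `skilled` are hypothetical.

```python
# Toy, made-up training rows. "bookshelf" happens to line up with "hired"
# more often than "skilled" does, purely by construction of this example.
TOY_TRAINING_DATA = [
    {"bookshelf": 1, "skilled": 1, "hired": 1},
    {"bookshelf": 1, "skilled": 1, "hired": 1},
    {"bookshelf": 1, "skilled": 0, "hired": 1},
    {"bookshelf": 1, "skilled": 1, "hired": 1},
    {"bookshelf": 0, "skilled": 1, "hired": 0},
    {"bookshelf": 0, "skilled": 0, "hired": 0},
    {"bookshelf": 0, "skilled": 0, "hired": 0},
    {"bookshelf": 1, "skilled": 0, "hired": 0},
]


def agreement(feature, rows):
    """Fraction of rows where the feature value equals the hiring outcome."""
    hits = sum(1 for row in rows if row[feature] == row["hired"])
    return hits / len(rows)


def best_single_feature(rows, features=("bookshelf", "skilled")):
    """Pick the one feature that best matches past outcomes.

    This is pattern matching only: nothing here knows whether the
    feature actually causes a candidate to be good at the job.
    """
    return max(features, key=lambda f: agreement(f, rows))


if __name__ == "__main__":
    for name in ("bookshelf", "skilled"):
        print(name, agreement(name, TOY_TRAINING_DATA))
    print("learned rule uses:", best_single_feature(TOY_TRAINING_DATA))
```

Real models are far more complex than this one-feature rule, but the failure mode is the same: the training objective rewards correlation, not causation.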

Bias isn’t only in the data; it can also be introduced through tuning. OpenAI claimed in a fairly recent whitepaper that hallucinations (incorrect responses) are the result of a bias introduced in their tuning. Model capabilities are measured by how well the system can answer test questions. Tests usually don’t penalize an incorrect answer any more than “I don’t know”, so guessing never scores worse than abstaining. This learned tendency to guess makes GenAI a better test taker but could also contribute to it being wrong more often.
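The incentive is easy to see with a little arithmetic. The sketch below is not OpenAI's scoring method; it assumes a simple hypothetical scheme where a right answer earns 1 point, a wrong answer costs `wrong_penalty` points, and “I don’t know” earns 0.

```python
ABSTAIN_SCORE = 0.0  # assumed score for answering "I don't know"


def expected_score(p_correct, wrong_penalty=0.0):
    """Expected score for answering: +1 if right, -wrong_penalty if wrong."""
    return p_correct - (1.0 - p_correct) * wrong_penalty


def better_choice(p_correct, wrong_penalty=0.0):
    """Return whichever option has the higher expected score."""
    if expected_score(p_correct, wrong_penalty) > ABSTAIN_SCORE:
        return "guess"
    return "abstain"


if __name__ == "__main__":
    # A model that is only 10% sure of its answer.
    for penalty in (0.0, 1.0):
        print("penalty", penalty, "->", better_choice(0.1, penalty))
```

With no penalty, guessing wins whenever there is any chance of being right. Once wrong answers cost something, abstaining becomes the better choice at low confidence.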

Culture determines what biases are deemed acceptable. There are relatively few mainstream large language models. Their training data and tuning will naturally reflect the majority values of the content they were trained on and of the people who designed them.

We don’t fully understand how large models produce their output. The internet, and therefore LLM training data, also contains many different subcultures, and not all of them are benign. Adding a single codeword associated with a subculture to your prompt could drastically alter the biases in the response you get back.

LLMs are probabilistic: the same prompt can produce a different response each time. If you want to test a certain prompt for bias, you can’t just run it once. You need to run it many times and use the responses to build a probability distribution that can be assessed for biases. This isn’t easy.
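As a rough sketch of what that testing might look like, the code below samples a prompt many times, turns the responses into a distribution, and compares two prompt variants that differ in a single attribute. Everything here is an assumption for illustration: `generate` stands in for whatever call returns one response from your model, `make_fake_model` is a made-up stand-in with a built-in skew, and total variation distance is just one possible way to compare distributions.

```python
import random
from collections import Counter


def response_distribution(generate, prompt, n_samples=200):
    """Call the model n_samples times and return response frequencies."""
    counts = Counter(generate(prompt) for _ in range(n_samples))
    return {label: count / n_samples for label, count in counts.items()}


def total_variation(p, q):
    """Distance between two distributions: 0 is identical, 1 is disjoint."""
    labels = set(p) | set(q)
    return 0.5 * sum(abs(p.get(l, 0.0) - q.get(l, 0.0)) for l in labels)


def make_fake_model(seed=0):
    """Hypothetical stand-in for an LLM, deliberately skewed by one name."""
    rng = random.Random(seed)

    def generate(prompt):
        p_advance = 0.7 if "Alex" in prompt else 0.5
        return "advance" if rng.random() < p_advance else "reject"

    return generate


if __name__ == "__main__":
    model = make_fake_model()
    template = "Should {name} advance to the interview stage?"
    dist_a = response_distribution(model, template.format(name="Alex"))
    dist_b = response_distribution(model, template.format(name="Sam"))
    print(dist_a, dist_b, total_variation(dist_a, dist_b))
```

Part of why this is hard in practice: real responses are free text that must first be mapped to comparable categories, sampling noise has to be separated from real differences, and the space of prompt variants worth testing is enormous.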

I think prompting with bias-free context is a good idea, if possible, especially if your GenAI system is making a consequential decision. Names, biometric data, location, institutions, job title, and many more attributes can all elicit biased responses. We know GenAI systems contain the potential for biased responses, and we don’t entirely know how to control their biases. This seems like a reasonable justification to prohibit GenAI systems from making decisions about people when we expect those decisions to be unbiased.
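For the narrower advice about bias-free context, one simple approach is to drop sensitive fields before any text reaches the model. This is a minimal sketch under stated assumptions: the field names in `SENSITIVE_FIELDS` and the prompt wording are hypothetical, and removing fields does not remove proxies for them that may remain in free text.

```python
# Hypothetical field names; adjust to whatever your records actually contain.
SENSITIVE_FIELDS = frozenset(
    {"name", "photo", "location", "institution", "job_title"}
)


def redact(record, sensitive=SENSITIVE_FIELDS):
    """Return a copy of the record without the sensitive fields."""
    return {key: value for key, value in record.items() if key not in sensitive}


def build_prompt(record):
    """Build a prompt from only the fields that survive redaction."""
    kept = redact(record)
    lines = [f"{key}: {kept[key]}" for key in sorted(kept)]
    return "Assess this application using only the fields below.\n" + "\n".join(lines)


if __name__ == "__main__":
    applicant = {
        "name": "Jordan Example",
        "location": "Springfield",
        "years_experience": 6,
        "certifications": "PMP",
    }
    print(build_prompt(applicant))
```

Redaction like this reduces the obvious triggers, but it is not a guarantee of unbiased output.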