Does social media need more than attention?

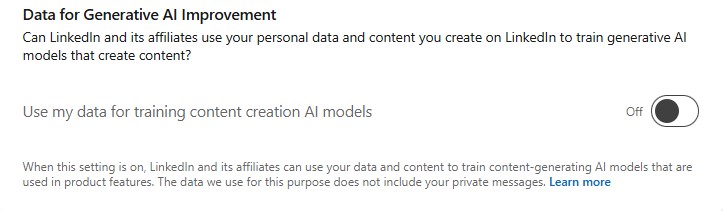

For transformers and recommendation algorithms, it is all about attention. Social media platforms want to capture as much of your attention as possible so they can show you ads and collect metadata about you. LinkedIn recently notified users that it would use their content to train AI models unless they opt out. I'm curious whether, and how, this third value stream (training data) will affect the recommendation feed.

What makes a post valuable is similar for human readers and AI models. Novel human-generated content contains more information for a model than AI-generated slop. Longer continuous content could also be more useful because it lets the model learn long-range references. The trouble is that it may be hard to incentivize users to create this kind of content. While quality training data is valuable, I would guess it is worth significantly less than ads and user data harvesting. LinkedIn already uses comments to boost a post's reach. Maybe that boost will become more prominent, since comments both increase engagement and build longer threads of content that reference each other.

LinkedIn already sells paid offerings to increase content reach. I can imagine it using a user's choice to opt out of AI training as a lever to limit reach. If you have opted out, your content is less valuable to the platform, and there is less incentive to show it. Even if LinkedIn doesn't actually do this, if enough users believe it might, they will be less likely to opt out.

Lastly, there may be some creative ways to train on data from users who have opted out. This assumes the preference is honored in the first place, but let's grant that it is. If I have opted out and someone who has not opted out reposts my content, one could argue that the reposted version falls under their setting. If there is a series of comments on a post, do you train only on the comments from users who have not opted out? This seems logical, but then you potentially lose much of the conversation's context. If posts with more discussion become more valuable because of model training, that may lead to user interface changes that are more conducive to conversational interaction.
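To make the comment question concrete, here is a minimal Python sketch of the naive policy of keeping only comments from users who have not opted out. It assumes a hypothetical data model where each comment records its author and the comment it replies to. Everything here (the `Comment` class, `filter_thread`, `orphaned_replies`, and the sample thread) is invented for illustration and does not describe LinkedIn's actual pipeline.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Comment:
    comment_id: int
    author: str
    text: str
    parent_id: Optional[int] = None  # None means a top-level comment on the post


def filter_thread(comments, opted_out):
    """Keep only comments whose authors have NOT opted out of AI training."""
    return [c for c in comments if c.author not in opted_out]


def orphaned_replies(kept):
    """Return kept replies whose parent comment was filtered out (they lost their context)."""
    kept_ids = {c.comment_id for c in kept}
    return [c for c in kept if c.parent_id is not None and c.parent_id not in kept_ids]


# Hypothetical thread: bob replies to alice, and carol replies to bob.
thread = [
    Comment(1, "alice", "Opt-out should be the default."),
    Comment(2, "bob", "Most people won't notice either way.", parent_id=1),
    Comment(3, "carol", "That's exactly why the default matters.", parent_id=2),
    Comment(4, "dave", "Has anyone checked the settings page?"),
]

opted_out = {"bob"}  # only bob has opted out in this example
kept = filter_thread(thread, opted_out)
print([c.comment_id for c in kept])                    # [1, 3, 4]
print([c.comment_id for c in orphaned_replies(kept)])  # [3]
```

Dropping a single opted-out commenter leaves carol's reply in the training set without the comment it was responding to, which is the loss of conversational context the paragraph above worries about.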

Are you in or out? Do you think that the value of training data will change recommendation algorithms?