Are LLMs profitable?

Today's frontier LLMs are losing money. A growing body of reporting suggests that these frontier-style models may never become profitable. This certainly isn't a consensus opinion, but it does seem to be gaining momentum. If LLMs don't make money, that will put a damper on the AI revolution.

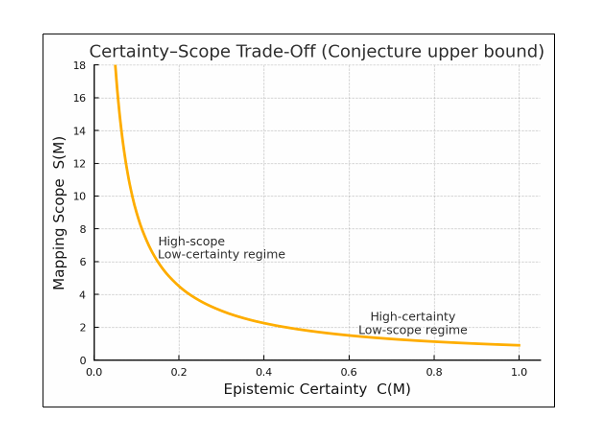

One potential path to profitability is hybrid systems built from smaller, special-purpose models. These models could run on edge compute (your phone or PC), or at least on commodity cloud hardware. Even if this approach works, we are still left with the problem of what to do with all the AI data centers.

Many people assume a data center is a data center. If that were true, we could simply use the extra capacity for traditional cloud workloads. To see why this won't work, it helps to get into the details a bit. The hardware in AI data centers is specialized for training and serving LLMs and can't effectively support other workloads. These facilities also have network and power requirements that are unusual and more expensive to meet. Finally, the hardware has a life span of only three to five years, so there is a limited window in which to put it to use.

Large models will probably still be developed in AI-specific data centers for the foreseeable future, but they are likely to be more experimental than production systems. That could leave us with excess AI compute capacity, on top of the additional AI data centers now under construction or planned for the near future. Without a path to profitability or a research need, these facilities will end up as an expensive write-off.